Comprehension Debt, the Hidden Tax of AI Coding

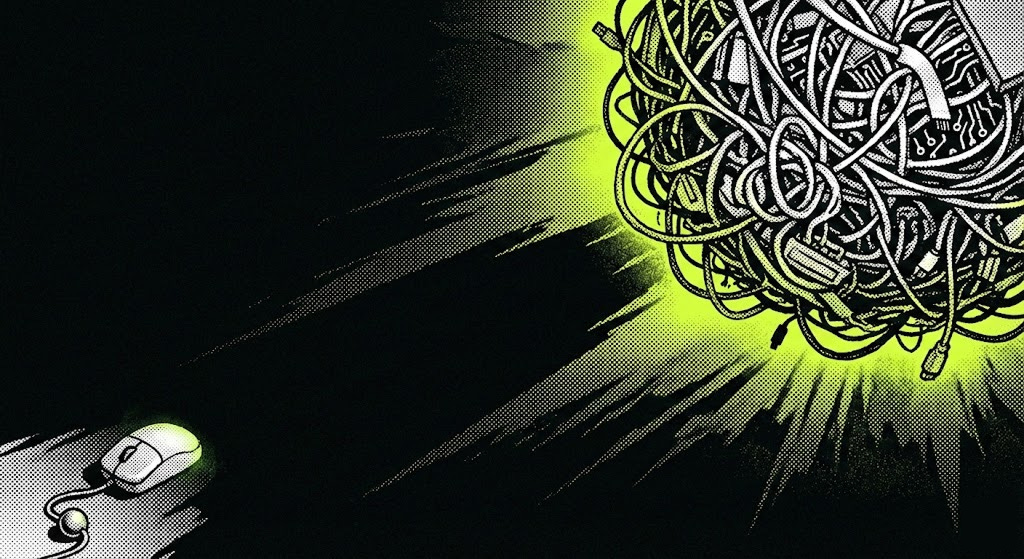

AI coding tools make you faster than ever. They also make you a stranger in your own codebase. That gap between what your code does and what you actually understand—that's comprehension debt. And it c

AI coding changed the game completely. Anyone can vibe code their ideas into working apps now.

But for experienced devs and engineers who can “talk” code, who have systemic thinking, it creates this superpower-like feeling. Finally. The tools match the ambition.

Engineers with the builder’s “disease” always had this problem. Ideas/effort ratio. A scarce resource problem. Time. The time needed to go from idea to a palpable outcome. The time needed to put thoughts into code.

That was the grunt work. The bottleneck. The thing that killed most ideas before they got started.

Now that game is completely different.

AI takes over the grunt work and the dev steps up as architect. As orchestrator. You have these relentless workers that just wait for your input. Your clear directions on what to build and how to build it.

Thankfully, the WHY remains human (still).

So you keep building. And building. And building.

After a while, you get this uneasy feeling. A feeling that’s somewhat new but also familiar. Something’s off. You can’t put your finger on it. And you can’t shake it off either.

Tests pass. Features ship. The product works. So what is it then?

But when you go through your codebase, you feel like a stranger. Because… you kinda are.

That feeling has a name. Comprehension debt. The hidden tax of AI coding.

The Gap Nobody Talks About

Technical debt is when you take shortcuts that create future work. Everyone knows this one.

Comprehension debt is different. Your code works perfectly. You just don’t understand it. The gap between what your codebase does and what you actually know about it. That gap.

Before AI tools, it stayed small. You wrote every line. You understood every decision. Even messy code lived rent-free in your head somewhere.

Now Claude generates 350 lines of complex logic in seconds. Tests pass. You commit. Ship. Done. Next, please.

Three months later, something breaks. You open that file and think, “Who wrote this?” Because it clearly wasn’t you.

How It Sneaks Up On You

The AI builds a temporary mental model of your codebase to complete its task. It sees connections, understands dependencies, and knows why it made certain choices. Then it finishes. And discards all of that knowledge.

Gone. Evaporated. Like it never existed (unless you specifically tell it to keep it).

So if you don’t do the work to rebuild that understanding in your own head, your mental model drifts. Slowly at first. Then faster.

Every AI-assisted change you don’t fully review. Every “this looks fine, ship it” moment. Every test that passes without understanding why.

It compounds. The code works. You just can’t explain how.

The Doom Loop

This is where it gets ugly.

Something breaks in the code you don’t fully understand. So you ask the AI to fix it. The AI patches it based on its temporary understanding and the (limited) knowledge and context it currently has. You commit the fix without fully grasping it.

Now you understand the code even less.

Next bug. Same cycle. Deeper in the rabbit hole.

Eventually, you hit a problem the AI can’t solve. Maybe a subtle edge case. Maybe a fundamental architecture issue the AI can’t decide on without your direction.

Either way, you’re forced to manually debug code that feels alien.

I know experienced devs who got stuck in this loop for days. Trapped in their own codebase. I’m one of them.

The Paradox

Now for the uncomfortable truth.

The same thing that makes you faster is making you weaker. Slowly. Imperceptibly.

As a dev, you were never paid to type code. You were paid to think. AI handles the typing better than you ever could or ever will.

But if you let it handle the thinking too, what exactly do you contribute?

That superpower feeling is real. But superpowers have costs.

Superman has kryptonite. Yours is the code you stopped understanding last week, or two months ago.

False Confidence

What makes comprehension debt dangerous is how invisible it is.

Tests pass. Code review clears. Users are happy. The product is being used. Every signal says you’re doing great.

But those signals measure output. Not understanding.

You can have a perfectly functioning codebase that you or your team don’t actually comprehend. That’s fine until it isn’t.

Then the debt comes due. All at once.

Staying Out of the Hole

There’s no hack here. No clever prompt that solves this. The price is attention.

Review everything. Every single change the AI makes. Don’t skim. Read. Understand. Yes, it’s slower. That’s the point. Speed got you into this hole. Deliberate slowness gets you out.

But “review everything” is advice you’ve heard before. Here’s what it actually looks like in practice:

Make the AI explain itself. Don’t just accept code. Before you commit anything substantial, ask: “Walk me through this logic. Why this approach? What are the alternatives? What are the tradeoffs?”

It forces you to engage with reasoning, not just the output.

A good explanation feels like a conversation with a senior dev who made deliberate choices. A bad one is vague hand-waving. Responses like “this is a common pattern” or “this handles edge cases.” When you get the bad version, push back. “Which edge cases? Why this pattern specifically?” If the AI can’t articulate it clearly, that’s a signal you need to understand it yourself before it goes in.

Refactor AI code by hand. Not to improve it. Just to understand it.

Pick a file the AI wrote last month. Rewrite it yourself. You’ll be surprised how often you discover logic you’d completely forgotten existed. Dependencies you didn’t realise were there. Decisions that made sense in a context you’ve since lost.

This is slow. Feels wasteful (especially these days). Do it anyway. The goal isn’t better code. It’s rebuilding mental models that have drifted away.

Document intent, not mechanics. Comments that say “loops through array” are worthless. The golden comments read more like “we process the oldest items first because the billing system assumes chronological order.”

When you force yourself to write why something exists, you have to understand it first. If you can’t write the why, you’ve found comprehension debt in real-time.
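Here’s a rough sketch of the difference, in Python. Everything in it is made up for illustration: the `Invoice` type, the field names, and both function names are hypothetical, not from any real billing system. The two functions do exactly the same thing. Only the comment changes.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Invoice:
    # Hypothetical record type, invented for this example.
    id: str
    created: date


def sorted_with_mechanics_comment(invoices: list[Invoice]) -> list[Invoice]:
    # Sorts the invoices by created date.
    # (Restates the code. Tells a future reader nothing new.)
    return sorted(invoices, key=lambda inv: inv.created)


def sorted_with_intent_comment(invoices: list[Invoice]) -> list[Invoice]:
    # We process the oldest items first because the billing system
    # assumes chronological order. Reordering here changes what gets
    # billed first, so treat this sort as a requirement, not a nicety.
    return sorted(invoices, key=lambda inv: inv.created)
```

If the second comment is the one you can’t write, that’s probably the file to reread before touching anything else.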

The Compound Interest Problem

Here’s what makes this worth the effort.

Comprehension debt compounds against you. Every file you don’t understand makes the next problem harder to debug. The context you’re missing connects to other context you’re missing. The holes link up.

But understanding compounds too. The developer who maintains mental models can navigate problems the speed-runner can’t even see. They spot architectural issues before they become expensive. They know which “quick fix” will create three more bugs downstream.

AI makes you faster at producing code. Understanding makes you faster at everything else. Debugging. Planning. Communicating. Deciding what to build next.

The developers treating AI like a magic box will hit walls. The ones treating it like a very fast junior dev who needs supervision will keep climbing.

The Real Question

I’m bullish on these tools. Use them daily. Can’t imagine going back.

But after a year of coding almost exclusively with AI, I spent two days last month debugging something that should’ve taken an hour. The code was mine (technically). I’d committed it. Approved the PR. Shipped it.

I just didn’t understand it anymore.

That’s the honest answer. A year in and I don’t have this figured out yet. The tactics I listed help, but I’m still finding the balance. Still noticing which projects I can navigate blind and which ones need me to pause and comprehend.

The ratio is shifting in a direction I don’t love.

The AI won’t remember why it made those choices.

You have to.